
Keywordsīansal, T., Pachocki, J., Sidor, S., Sutskever, I., Mordatch, I.: Emergent complexity via multi-agent competition. The result of the 1v1 BVR air combat problem shows that the improved NFSP-BRHC algorithm outperforms both the NFSP and the Self-Play (SP) algorithms. These two components helped our algorithm to achieve efficient training in the high-fidelity simulation environment. Our training algorithm improves Neural Fictitious Self-Play (NFSP) and proposes the best response history correction (BRHC) version of NFSP. Our decision-making model uses the Soft actor-critic (SAC) algorithm, a method based on maximum entropy, as the action control of the reinforcement learning part, and introduces an action mask to achieve efficient exploration. To address this problem, we propose a reinforcement learning self-play training framework to solve it from two aspects: the decision model and the training algorithm. The complexity of action and state space in this game makes it difficult to learn high-level air combat strategies from scratch.

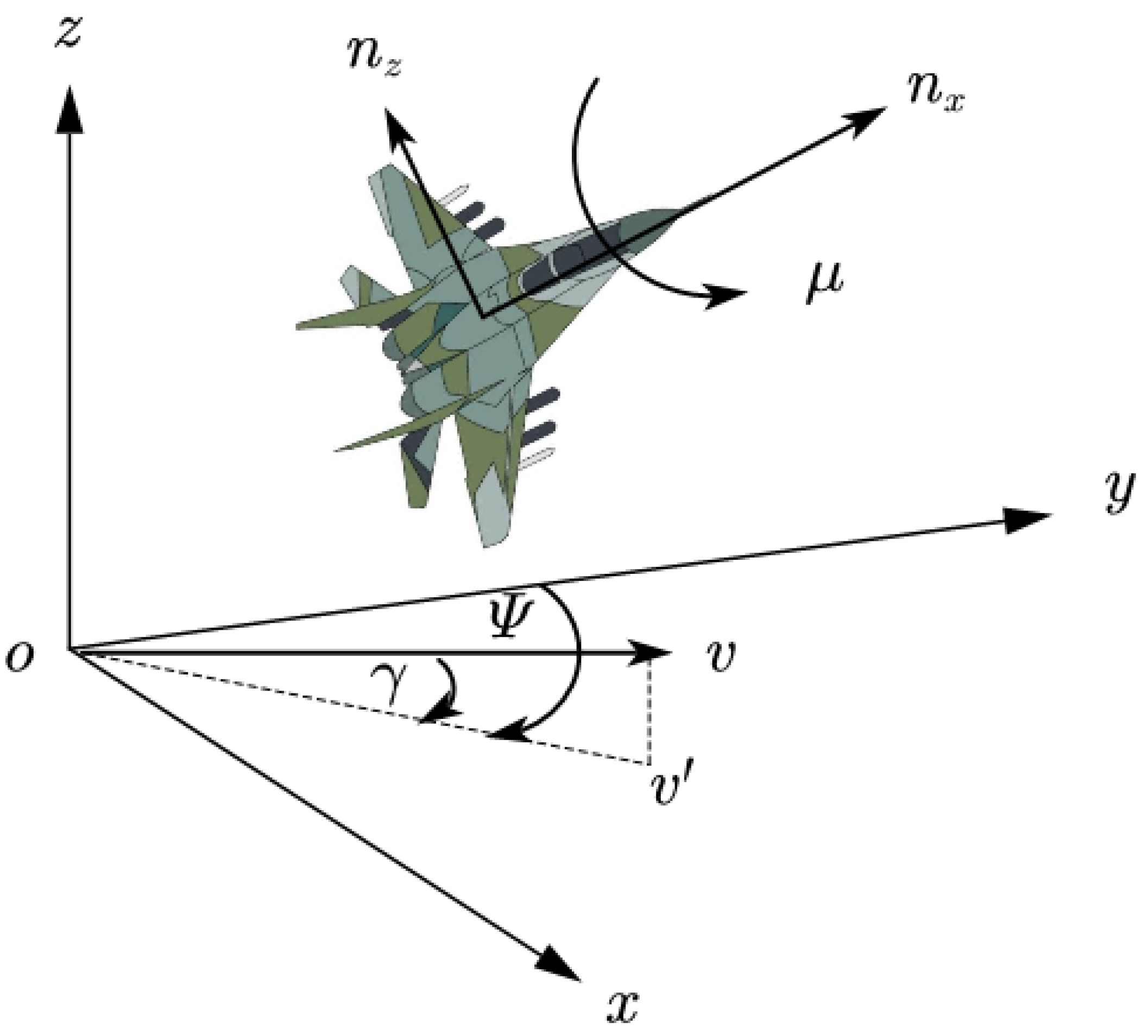

In contrast to most reinforcement learning problems, 1v1 BVR air combat belongs to the class of two-player zero-sum games with long decision-making periods and sparse rewards. We study the problem of utilizing reinforcement learning for action control in 1v1 Beyond-Visual-Range (BVR) air combat.
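To make the action-mask idea concrete, here is a minimal sketch of how invalid actions can be excluded from a policy before sampling. It assumes a discrete action set scored by logits, which the abstract does not specify, and it is not the authors' implementation. The names `masked_policy`, `policy_entropy`, and `sample_action` are hypothetical.

```python
import math
import random


def masked_policy(logits, mask):
    """Softmax over logits, giving masked-out actions exactly zero probability.

    logits: one score per action (assumed discrete action set).
    mask:   one boolean per action, True where the action is currently allowed.
    """
    if len(logits) != len(mask):
        raise ValueError("logits and mask must have the same length")
    valid = [score for score, ok in zip(logits, mask) if ok]
    if not valid:
        raise ValueError("at least one action must be allowed")
    top = max(valid)  # subtract the largest valid logit for numerical stability
    weights = [math.exp(score - top) if ok else 0.0
               for score, ok in zip(logits, mask)]
    total = sum(weights)
    return [w / total for w in weights]


def policy_entropy(probs):
    """Shannon entropy of the policy; zero-probability actions contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def sample_action(probs, rng):
    """Draw one action index according to the masked probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical example: three actions, the middle one is currently not allowed.
rng = random.Random(0)
probs = masked_policy([1.0, 2.0, 3.0], [True, False, True])
samples = [sample_action(probs, rng) for _ in range(200)]
```

Because the masked action has zero probability, it is never sampled, and the entropy term that a maximum-entropy method such as SAC maximizes is computed only over actions that are actually available. In this sketch, that is how masking narrows exploration to the feasible part of the action space.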
